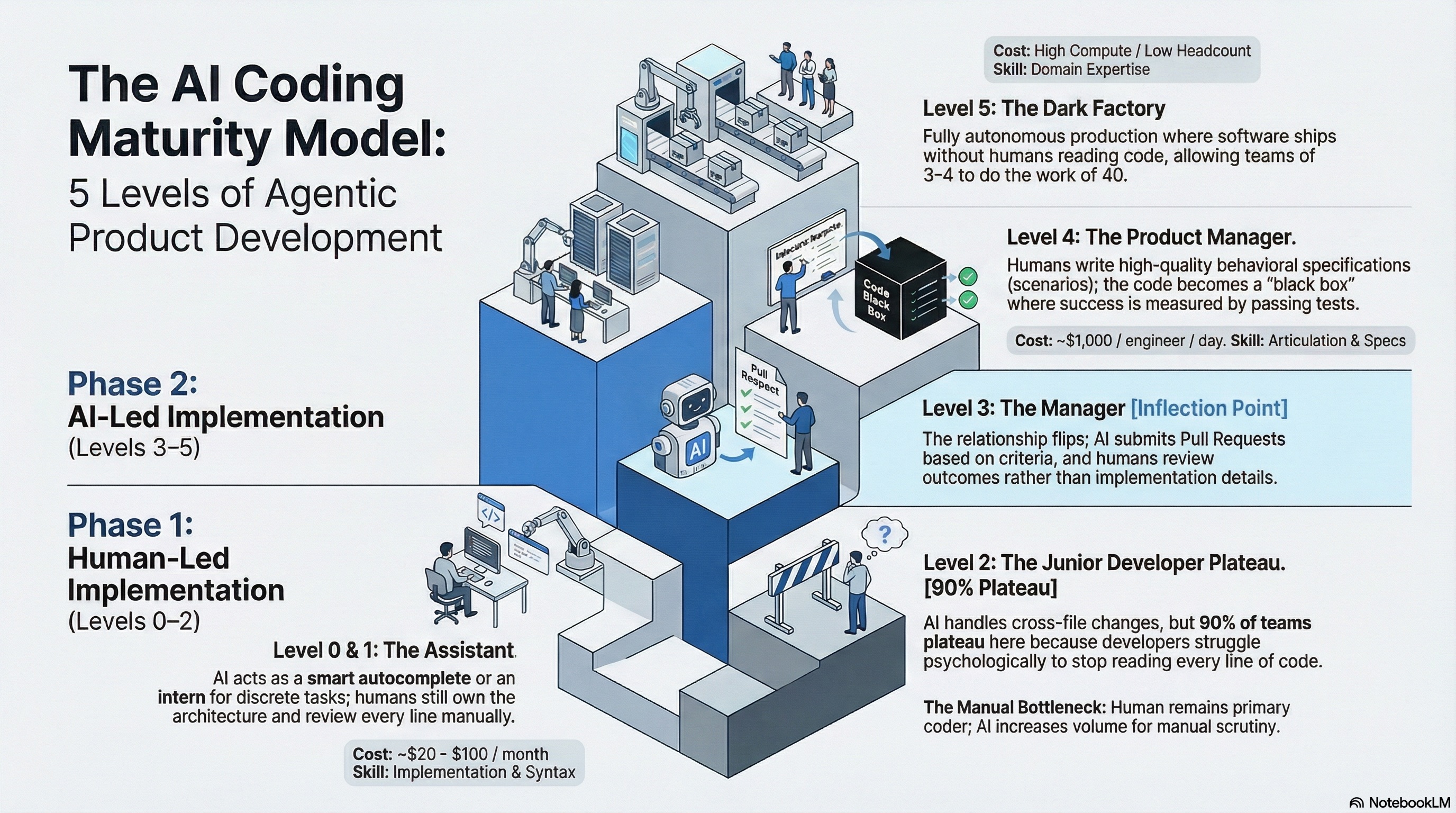

The software industry is undergoing a shift more profound than the move from waterfall to agile. Many of our clients are trying to accelerate their transition to AI-assisted and agentic software development. AI isn’t just changing how developers write code; it’s redefining what a developer is. But not every organization is moving at the same pace. This is the first of two blog posts on AI’s role in a software product company’s development pipeline.

The six-level framework below gives you a concrete way to assess where your team sits today, why you might be stuck, and what it takes to move forward. Most organizations believe they are further along than they are. Here’s how to be honest with yourself.

Level 0 — The Autocomplete Era

What it looks like: AI suggests the next line of code. Think standard GitHub Copilot or similar inline suggestion tools.

Human role: You are still writing the software. AI is reducing keystrokes, not making decisions.

You’re here if:

- Your team uses AI primarily as a smarter autocomplete

- Developers rarely accept multi-line or multi-function suggestions

- No process changes have been made to accommodate AI

The trap: Many teams install Copilot, call themselves “AI-enabled,” and stop here.

Level 1 — The Coding Intern

What it looks like: You hand the AI a discrete, well-scoped task — “write this function,” “refactor this module” — and it delivers a result you review and integrate.

Human role: Architecture, judgment, and integration remain entirely with humans. Every line is manually reviewed.

You’re here if:

- Developers use AI for isolated tasks but own the integration

- Prompts are specific and tightly bounded

- Code review is still line-by-line, 100% of the time

The trap: This feels productive, but you’re still doing the hard coordination work yourself.

Level 2 — The Junior Developer

What it looks like: AI handles multi-file changes, understands dependencies across modules, and navigates complex codebases with context.

Human role: You’re still reading all the code — just at higher volume. You’re reviewing AI output more than writing.

You’re here if:

- You use tools like Cursor, Aider, or Claude Code for cross-file tasks

- Your team reviews AI-generated PRs but scrutinizes every line

- Developers feel uncomfortable not reading the code

The Sticking Point: This is where 90% of “AI-native” developers currently plateau. The bottleneck isn’t capability — it’s psychology. Developers who built their identity around writing good code find it deeply uncomfortable to stop reading it.

Level 3 — The Manager (The Inflection Point)

What it looks like: The relationship flips. The AI implements features and submits Pull Requests. You are no longer in the code — you’re directing the agent and approving or rejecting at the PR level.

Human role: Setting direction and reviewing outcomes, not implementation.

You’re here if:

- AI agents autonomously open PRs against acceptance criteria

- Developers review what was built, not how it was built

- Your team has explicitly accepted the psychological challenge of letting go

The trap: This level requires a genuine organizational and cultural shift. Teams that try to move to Level 3 while keeping Level 2 habits will fail. The J-Curve productivity dip is real here — teams get slower before they get faster.

Diagnostic question: When a PR comes in from your AI agent, do your engineers read every line — or do they ask “does it meet the spec and pass the scenarios?”

Level 4 — The Product Manager

What it looks like: A human writes a specification. The AI builds it. The human returns to check whether the defined scenarios pass. The code itself is a black box.

Human role: Writing high-quality specifications and evaluating behavioral outcomes. The new core skill is articulation, not implementation. The same rule already holds today: how well a product manager articulates the desired outcome to human engineers directly affects a product’s success.

You’re here if:

- Your team writes external behavioral specifications (“scenarios”) separate from the codebase

- AI is never shown the evaluation criteria, preventing it from “teaching to the test”

- Headcount has visibly shifted away from engineers toward spec writers and domain experts
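To make the separation between specification and scenarios concrete, here is a minimal Python sketch. Everything in it is hypothetical and chosen for illustration: the `Scenario` type, the `build_from_spec` stand-in for an agent run, and the slug example are our assumptions, not a description of any particular tool. The point it shows is structural: the builder receives only the spec, and the evaluator judges behavior without reading the implementation.

```python
from dataclasses import dataclass
from typing import Callable

# Stand-in for "the software the agent built": here, just a function.
Artifact = Callable[[str], str]


@dataclass(frozen=True)
class Scenario:
    name: str
    given: str
    expected: str


# The only thing the agent is shown (hypothetical example spec).
SPEC = "Turn an article title into a lowercase, hyphen-separated URL slug."

# Kept outside the agent's workspace and never included in its prompt.
HOLDOUT_SCENARIOS = [
    Scenario("simple title", "Hello World", "hello-world"),
    Scenario("extra whitespace", "  Dark   Factory ", "dark-factory"),
]


def build_from_spec(spec: str) -> Artifact:
    """Hypothetical stand-in for an agent run: it sees the spec and nothing else."""
    def slugify(title: str) -> str:
        return "-".join(title.lower().split())
    return slugify


def evaluate(artifact: Artifact, scenarios: list[Scenario]) -> dict[str, bool]:
    """Black-box check: compare observed behavior to expectations, never read the code."""
    return {s.name: artifact(s.given) == s.expected for s in scenarios}


results = evaluate(build_from_spec(SPEC), HOLDOUT_SCENARIOS)
accepted = all(results.values())
print(results, "accepted" if accepted else "rejected")
```

In a real pipeline the artifact would be a deployed service or binary rather than a function, but the acceptance decision has the same shape: scenarios pass or they don’t.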

Key metric: If your team is spending ~$1,000 per engineer per day on compute and tokens, you’re operating at this level. If you’re on a $20/month or even a $100/month seat license, you’re almost certainly at Level 0 or 1.
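To put the size of that gap in perspective, here is a quick back-of-the-envelope calculation in Python. The dollar figures come from the paragraph above; the 21 working days per month is our assumption, so treat the result as an order-of-magnitude comparison rather than a benchmark.

```python
DAILY_SPEND_PER_ENGINEER = 1_000  # USD per day, the Level 4 figure quoted above
WORKING_DAYS_PER_MONTH = 21       # assumption: not stated in the article


def spend_ratio(seat_price_per_month: float) -> float:
    """How many times larger Level 4 monthly spend is than a seat license."""
    monthly_spend = DAILY_SPEND_PER_ENGINEER * WORKING_DAYS_PER_MONTH
    return monthly_spend / seat_price_per_month


for seat_price in (20, 100):
    print(f"${seat_price}/month seat: Level 4 spend is ~{spend_ratio(seat_price):,.0f}x higher")
```

Under that assumption, Level 4 spend comes to roughly $21,000 per engineer per month, about 1,050 times a $20 seat and 210 times a $100 seat.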

Level 5 — The Dark Factory

What it looks like: Fully autonomous software production. A specification goes in; working software comes out. No human writes or reviews code.

Human role: Defining what to build and evaluating whether it works. The factory runs itself.

You’re here if:

- Software ships without any human reading the implementation

- Your organization has radically flattened (teams of 3–4 generating what once required 30–40)

- The coordination layer — Scrum masters, release managers, middle management — has been structurally eliminated

This is the frontier. No organizations are operating at scale here today, but that will more than likely change. We believe many companies will be operating at scale here within a few years.
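If you want to run the “You’re here if” checklists above as a quick team exercise, the following Python sketch is one way to do it. The checklist items are paraphrased from the levels above; the scoring rule (your level is the highest one where every item is answered yes) and the `assess_level` function are our assumptions, chosen to be deliberately strict in the spirit of being honest with yourself.

```python
from typing import Optional

# Checklist items paraphrased from the "You're here if" lists for each level.
CHECKLISTS: dict[int, list[str]] = {
    0: ["AI is mainly a smarter autocomplete",
        "Multi-line suggestions are rarely accepted",
        "No process changes made for AI"],
    1: ["AI does isolated tasks; developers own integration",
        "Prompts are specific and tightly bounded",
        "Code review is line-by-line, 100% of the time"],
    2: ["Cross-file tasks are done with agentic coding tools",
        "AI-generated PRs are reviewed line by line",
        "Developers are uncomfortable not reading the code"],
    3: ["Agents open PRs autonomously against acceptance criteria",
        "Review covers what was built, not how",
        "Team has explicitly accepted letting go"],
    4: ["Behavioral scenarios live outside the codebase",
        "AI never sees the evaluation criteria",
        "Headcount has shifted toward spec writers and domain experts"],
    5: ["Software ships with no human reading the implementation",
        "Teams of 3-4 produce what once took 30-40",
        "The coordination layer has been structurally eliminated"],
}


def assess_level(answers: dict[int, list[bool]]) -> Optional[int]:
    """Return the highest level whose checklist is fully answered True, else None.

    Assumed scoring rule: partial credit does not count.
    """
    best: Optional[int] = None
    for level, items in CHECKLISTS.items():
        given = answers.get(level, [])
        if len(given) == len(items) and all(given):
            best = level if best is None else max(best, level)
    return best


# Example: a team that meets everything at Level 2 but only part of Level 3.
example_answers = {
    2: [True, True, True],
    3: [True, False, True],
}
print("Assessed level:", assess_level(example_answers))
```

A team that ticks two of the three Level 3 boxes still scores as Level 2 here, which matches the pattern described in this post: believing you are one level further along than you are.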

The Talent Reckoning: What This Means for Your Team

The maturity level your organization operates at directly predicts the talent strategy you need:

- Entry-level roles are declining fast. U.S. junior developer job postings are down 67%. AI handles what interns once did.

- Generalists beat specialists. When AI handles implementation, human value concentrates in domain expertise and systems thinking — not language-specific skills.

- The new residency model: Progressive organizations are training junior talent by having them review and direct AI output rather than write code from scratch — a fundamentally different skill set.

The Bottom Line

Most organizations are at Level 2, believe they are at Level 3, and are nowhere near Level 4 or 5.

The gap isn’t technical; the tools exist today to move to Level 4. The gap is organizational and psychological: the willingness to redesign workflows, restructure teams, and let go of code review as the primary quality gate.

The organizations that navigate this transition will operate with radically smaller teams, dramatically lower coordination overhead, and compounding productivity advantages. The ones that don’t will spend the next three years getting slower while believing they are getting faster.

The question isn’t whether the Dark Factory is coming. It’s whether you’ll be running one well enough to compete, or losing ground to an agent-first company that does.

AKF helps companies scale their processes and architecture. Contact us; we would love to partner with you.